At some point, you may want to cut out part of your video image. For example, the original video may not have been framed well, and you may want a tighter shot. Or there may be something objectionable in the shot that you want to remove. Cutting out this unwanted video is called cropping. If you're targeting video iPods, you also have to resize your video to 320×240, which is the resolution of the iPod video screen. Video-editing platforms allow you to do this, but in order to do it correctly without introducing any visual distortion, you must understand what an aspect ratio is.

Aspect ratios

The aspect ratio is the ratio of the width to the height of a video image. Standard definition television has an aspect ratio of 4:3 (or 1.33:1). High definition TV has an aspect ratio of 16:9 (1.78:1). You've no doubt noticed that all the new HDTV-compatible screens are wider than standard TVs. When you're cropping and resizing video, it's critical to maintain your aspect ratio; otherwise, you'll stretch the video in one direction or another.
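To make the arithmetic concrete, here is a minimal Python sketch that reduces a frame size to its simplest width:height ratio and also prints it in the N:1 form used above. The function name `aspect_ratio` is hypothetical, and the sketch assumes square pixels, so the ratio of the frame dimensions is taken to be the shape you see on screen.

```python
from math import gcd


def aspect_ratio(width: int, height: int) -> tuple[int, int]:
    """Reduce a frame size to its simplest width:height ratio."""
    divisor = gcd(width, height)
    return width // divisor, height // divisor


# Compare a few common frame sizes as both a ratio and a decimal (N:1).
for w, h in [(640, 480), (320, 240), (1920, 1080), (720, 486)]:
    rw, rh = aspect_ratio(w, h)
    print(f"{w}x{h} -> {rw}:{rh} ({w / h:.2f}:1)")
```

640×480 and 320×240 both reduce to 4:3 and 1920×1080 reduces to 16:9, but 720×486 reduces to 40:27 (about 1.48:1), which is a first hint that digitized NTSC frames need special handling.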

To better understand this, let's look at how NTSC video is digitized. The original signal is displayed on 486 lines. Each of these lines, or rasters, is a "stripe" of continuous video information. When the signal is digitized, each line is divided into 720 discrete slices; each slice is assigned a value, and the values are stored digitally.

However, when you display the digitized 720×486 video on a computer monitor, the video appears slightly wider than on a television, or looked at another way, the video seems a bit squished. People look a little shorter and stockier than usual, which in general is not a good thing. Why is this?

If you do the math, 720×486 is not a 4:3 aspect ratio; it works out to about 1.48:1. If you could zoom in and look really closely at the tiny slices of NTSC video that were digitized, they would be slightly taller than they are wide. But computer monitor pixels are square. So when 720×486 video is displayed on a computer monitor, it appears stretched horizontally. To make the video look right, you must resize it to a 4:3 frame size such as 640×480 or 320×240. This restores the original aspect ratio, and the image looks right.
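The "slightly taller than wide" claim can be quantified as a pixel aspect ratio: the width of one stored pixel relative to its height. The Python sketch below is illustrative only; the helper names are hypothetical, and it treats all 720 samples as part of the 4:3 picture, as the paragraph above does. Some broadcast specifications count only about 704 samples as active picture and arrive at a slightly different figure (commonly quoted as 10:11).

```python
from fractions import Fraction


def pixel_aspect_ratio(stored_w, stored_h, display_w, display_h):
    """Width of one stored pixel relative to its height when the whole
    stored frame is shown at a display_w:display_h aspect ratio."""
    return Fraction(display_w, display_h) / Fraction(stored_w, stored_h)


def square_pixel_width(stored_h, display_w, display_h):
    """Width the frame would need, in square pixels, to keep its height
    and still show the correct display_w:display_h shape."""
    return Fraction(stored_h * display_w, display_h)


par = pixel_aspect_ratio(720, 486, 4, 3)
print(par, float(par))                    # 9/10 0.9
print(square_pixel_width(486, 4, 3))      # 648
```

Each NTSC sample is about 0.9 as wide as it is tall, so showing it as one square monitor pixel spreads 648 pixels' worth of picture across 720, roughly 11 percent too wide.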

Note: Those of you paying attention may be wondering about standard definition television displayed on the new widescreen models. The simple answer is that most widescreen TVs stretch standard definition pictures to fill the entire 16:9 screen, introducing ridiculous amounts of distortion. Why that is considered an improvement is anyone's guess.

With the availability of HDV cameras, some of you may be fortunate enough to be working in HDV, which offers a native widescreen format. If so, you'll be working with a 16:9 aspect ratio and a frame size such as 1280×720 or 1920×1080. Regardless of the format you're working in, the key is to maintain your aspect ratio.

Cropping

If you decide you need to do some cropping, the key is to crop a little off each side to maintain your aspect ratio (see Figure 1). Some video-editing platforms can maintain the aspect ratio automatically when you perform a crop, which is very handy. However, many encoding tools require that you manually specify the number of pixels to shave from the top, bottom, right, and left of the frame. If that's the case, you have to do the math yourself and make sure you crop the right amount from each side.

Figure 1: Be careful with your aspect ratio when you crop.

As an example, let's say that you needed to shave off the bottom edge of your video. You could estimate that you wanted to crop off the bottom 5 percent of your screen, which would mean 24 lines of video. Assuming you were working with broadcast video, to maintain your aspect ratio, you'd need to crop a total of:

24 × 720 / 486 ≈ 35.6, or 36 pixels

So you'd need to cut 36 pixels off the width to maintain your aspect ratio. You could do this by taking 18 pixels off each side, or 36 pixels off one side. Either works; where you crop depends on what is in your video frame. Of course, this assumes that you're working with 720×486 NTSC video. The math varies slightly if you've already resized the video to a 4:3 aspect ratio such as 640×480: the same 24 lines then correspond to 24 × 640 / 480 = 32 pixels.
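If your encoding tool wants per-edge crop values, the 5 percent example can be scripted. This is a minimal Python sketch with hypothetical helper names; it rounds to whole pixels, so the cropped frame keeps the original ratio only approximately, and it assumes the horizontal crop is split as evenly as possible between the left and right edges.

```python
def matching_width_crop(lines_cropped: int, frame_w: int, frame_h: int) -> int:
    """Pixels to remove horizontally so that the cropped frame approximately
    keeps the frame_w:frame_h ratio of the stored frame (whole pixels)."""
    return round(lines_cropped * frame_w / frame_h)


def split_crop(total: int) -> tuple[int, int]:
    """Split a horizontal crop between the left and right edges."""
    left = total // 2
    return left, total - left


lines = round(0.05 * 486)                     # 5 percent of the height -> 24
total = matching_width_crop(lines, 720, 486)  # 24 * 720 / 486 -> 36
print(lines, total, split_crop(total))        # 24 36 (18, 18)
print(matching_width_crop(24, 640, 480))      # 32 for a 640x480 frame
```

An odd total, such as 35, would split as 17 and 18, so one edge gives up one extra pixel.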

One thing to bear in mind is that some codecs have limitations on the dimensions they can encode. Codecs divide the video frame into small boxes known as macroblocks. Some codecs require macroblocks of 16×16 pixels, which means that your video dimensions must be divisible by 16. Most modern codecs allow smaller blocks of 8×8 pixels, or even 4×4 pixels. The great thing about 320×240 is that both dimensions are divisible by 16, so it works even with the largest macroblocks.
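Before encoding, you can check whether a frame size divides evenly into a given block size. The Python sketch below is a hypothetical helper, not part of any codec's API; the default of 16 simply reflects the largest macroblock size mentioned above.

```python
def fits_macroblocks(width: int, height: int, block: int = 16) -> bool:
    """True if both dimensions divide evenly into block x block squares."""
    return width % block == 0 and height % block == 0


def snap_down(n: int, block: int = 16) -> int:
    """Largest multiple of block that is not larger than n."""
    return n - n % block


for w, h in [(320, 240), (640, 480), (720, 486)]:
    print(f"{w}x{h}:", {b: fits_macroblocks(w, h, b) for b in (16, 8, 4)})

print(snap_down(486))  # 480
```

320×240 and 640×480 pass at every block size, while 720×486 fails even at 4×4 because 486 is not a multiple of 4.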

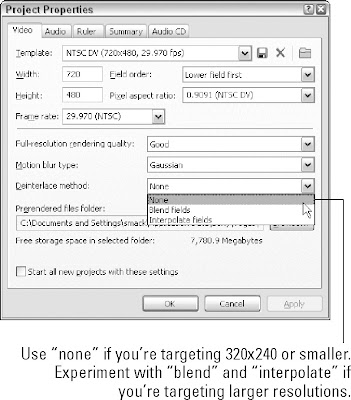

Resizing

Resizing is pretty easy; just make sure you're resizing to the correct aspect ratio.
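One way to pick a correct output size is to choose a width and derive the height from the intended display aspect ratio. The following Python sketch is a hypothetical illustration that assumes square output pixels; the optional `block` parameter rounds the height to a macroblock-friendly multiple, and `stretch_factor` reports how far a given output size departs from the intended shape.

```python
from fractions import Fraction


def target_height(width: int, display_aspect: Fraction, block: int = 1) -> int:
    """Height that matches width at display_aspect (square pixels),
    rounded to the nearest multiple of block."""
    return round(Fraction(width) / display_aspect / block) * block


def stretch_factor(out_w: int, out_h: int, display_aspect: Fraction) -> Fraction:
    """How much wider (>1) or narrower (<1) the output looks than intended."""
    return Fraction(out_w, out_h) / display_aspect


FOUR_THREE = Fraction(4, 3)
SIXTEEN_NINE = Fraction(16, 9)

print(target_height(320, FOUR_THREE))           # 240
print(target_height(1280, SIXTEEN_NINE))        # 720
print(target_height(480, SIXTEEN_NINE, 16))     # 272 (270 snapped to 16)
print(stretch_factor(320, 216, FOUR_THREE))     # 10/9: about 11% too wide
```

The last line shows the classic mistake: shrinking 720×486 uniformly to 320×216 keeps the stored proportions rather than the 4:3 picture, so the result looks about 11 percent too wide. Snapping 270 to 272 trades away less than 1 percent of accuracy for codec-friendly dimensions.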